In Microsoft Word for Windows, it is not possible to extract embedded pages from a PDF document.

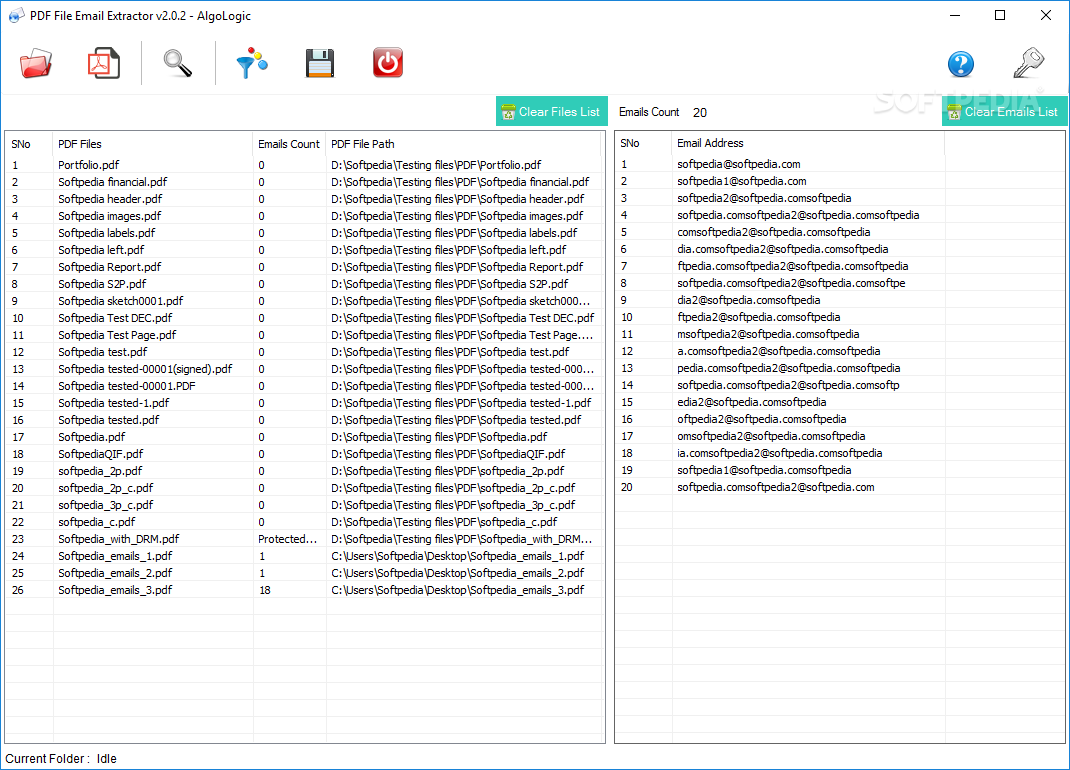

BitRecover PDF Attachment Extractor Wizard 2.0

PDF File Attachment Extractor software is a tool for extracting attached files from PDF documents. It scans every attachment on the PDF pages and then extracts them. It handles small and large files alike and saves the extracted PDF documents in one folder. The tool can extract attachments from multiple pages of an Adobe PDF in a single pass, and it can extract a PDF embedded in a Word document in a couple of clicks. Its bulk conversion feature lets you batch extract data from PDF attachments, and it can extract the entire contents of PDF documents, such as attached images or other documents. With this feature, you can view and share PDF documents with ease. Many users keep various kinds of content in their PDF file attachments, such as images, and extracting attached images is simple with a PDF attachment extractor. The software can extract one or more images from PDF attachments and save them to your desktop.

Do you want to extract the URLs that are in a specific PDF file? If so, you're in the right place. In this tutorial, we will use the pikepdf and PyMuPDF libraries in Python to extract all links from PDF files.

We will use two methods to get links from a particular PDF file. The first extracts annotations, which are markups, notes and comments that you can click in your regular PDF reader and that redirect to your browser. The second extracts all raw text and uses regular expressions to parse URLs.

To get started, let's install these libraries: `pip3 install pikepdf PyMuPDF`

Method 1: Extracting URLs using Annotations

In this technique, we will use the pikepdf library (`import pikepdf`) to open a PDF file, iterate over all annotations of each page, and check whether there is a URL there. Each URL found is added to a `urls` list, and the script ends by printing the count with `print(" Total URLs extracted:", len(urls))`.

I'm testing on this PDF file, but feel free to use any PDF file of your choice; just make sure it has some clickable links. After running that code, the output lists each link on a line starting with `URL Found:`.

Awesome, we have successfully extracted 30 URLs from that PDF paper.

Related: How to Extract All Website Links in Python.

Method 2: Extracting URLs using Regular Expressions

In this section, we will extract all raw text from our PDF file and then use regular expressions to parse URLs. First, let's get the text version of the PDF with PyMuPDF (`import fitz  # pip install PyMuPDF`). Now `text` is the target string we want to parse URLs from, so let's use the `re` module: define a pattern `url_regex` (it begins `https?:\/\/(www\.)?`), loop over `re.finditer(url_regex, text)`, and collect each match in a `urls` list.

This time we only extract 9 URLs from that same PDF file. This doesn't mean the second method is inaccurate: it parses only URLs that are in text form (not clickable). There is one problem with this method, however: URLs may contain new lines (`\n`), so you may want to allow for that in the `url_regex` expression.

So to conclude, if you want to get URLs that are clickable, use the first method, which is preferable. If you want to get URLs that are in text form, the second may help you do that.

If you want to extract tables or images from PDFs, there are separate tutorials for that.

Learn also: How to Make an Email Extractor in Python.
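The two methods described above can be sketched roughly as follows. This is a minimal illustration, not the tutorial's exact script: the function names, the `URL_PATTERN` expression, and the trailing-punctuation cleanup are all assumptions introduced here. The third-party libraries are imported inside the functions so that the regex helper can be tried on plain strings without them.

```python
import re

# Assumption: a deliberately simple URL pattern for illustration only;
# it is not the tutorial's own url_regex.
URL_PATTERN = re.compile(r"https?://[^\s<>\"')\]]+")


def urls_from_annotations(pdf_path):
    """Method 1: collect the targets of clickable link annotations."""
    import pikepdf  # pip3 install pikepdf

    urls = []
    with pikepdf.Pdf.open(pdf_path) as pdf:
        for page in pdf.pages:
            annots = page.get("/Annots")
            if annots is None:
                continue  # this page has no annotations
            for annot in annots:
                action = annot.get("/A")
                if action is None:
                    continue  # annotation without an action
                uri = action.get("/URI")
                if uri is not None:
                    urls.append(str(uri))
    return urls


def text_from_pdf(pdf_path):
    """Method 2, step 1: extract the raw text of every page."""
    import fitz  # pip install PyMuPDF

    with fitz.open(pdf_path) as doc:
        # Older PyMuPDF releases spell this method getText().
        return "".join(page.get_text() for page in doc)


def urls_from_text(text):
    """Method 2, step 2: find URLs written out as plain text."""
    # Strip sentence punctuation that often trails a URL in running text.
    return [m.group(0).rstrip(".,;") for m in URL_PATTERN.finditer(text)]
```

With a local file (the name `paper.pdf` is a placeholder), `urls_from_annotations("paper.pdf")` returns the clickable links, while `urls_from_text(text_from_pdf("paper.pdf"))` returns the links that appear as text. This sketch does not rejoin URLs that are split across lines, so the newline caveat still applies.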
